a collection of interesting things I've worked on and learned from :)

the idea for sprout came when I was sitting in my backyard over winter break, staring at a parched garden bed that hadn't been watered since I first left for college.

both of my parents have mobility challenges which make keeping our garden healthy difficult. so why not make a robot do it?

this turned into my team's project for TreeHacks, Stanford's annual hackathon. originally, we thought a rover would be best, but quickly realized its power demands made supplies prohibitively expensive. motion had to be bounded, which felt like a natural use case for a 3d-printer-style gantry.

after making some rough sketches and dimensioning everything out, we decided to full-send the only implementation that was financially feasible. with less than a week left, we spent 300 dollars on 2 industrial linear actuators from eBay, courtesy of some sketchy guy from Fresno:

our final idea was ambitious but manageable: embed soil and temperature sensors in a dirt plot, feed that data to a 4-bit quantized 4B-parameter model edge-deployed on a Jetson Nano, and let the model both observe and act on the plot through a mounted camera and water pump.

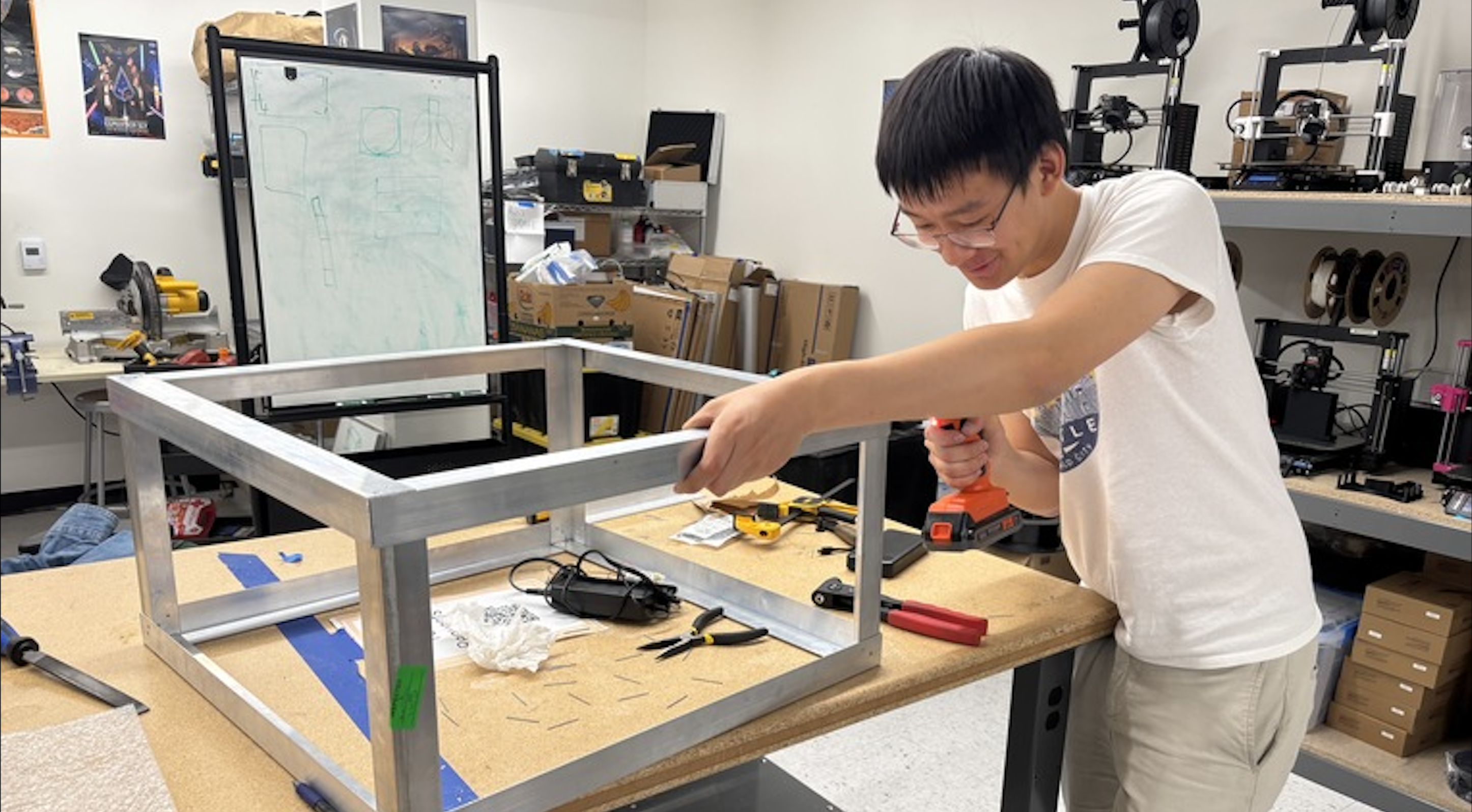

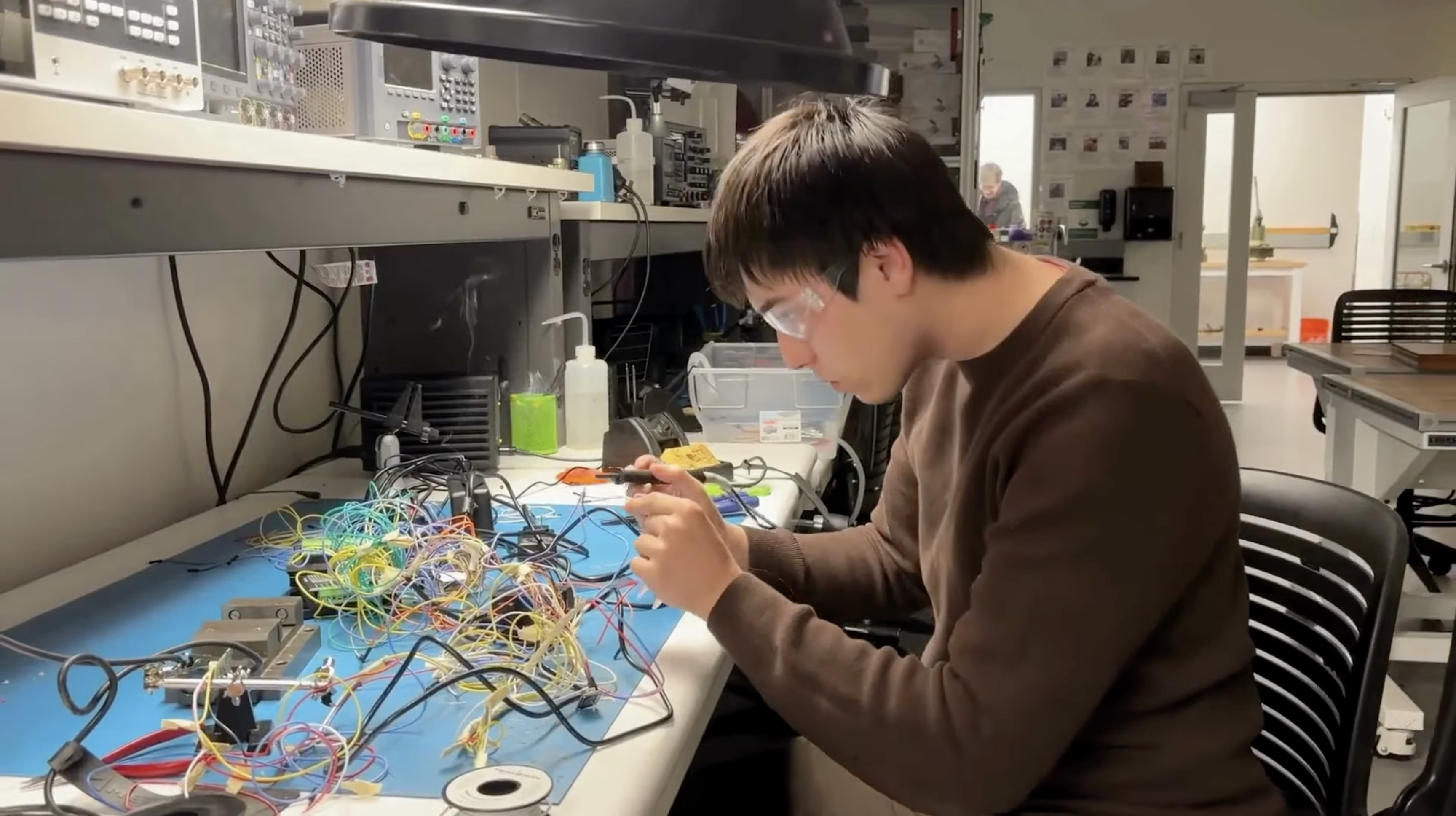

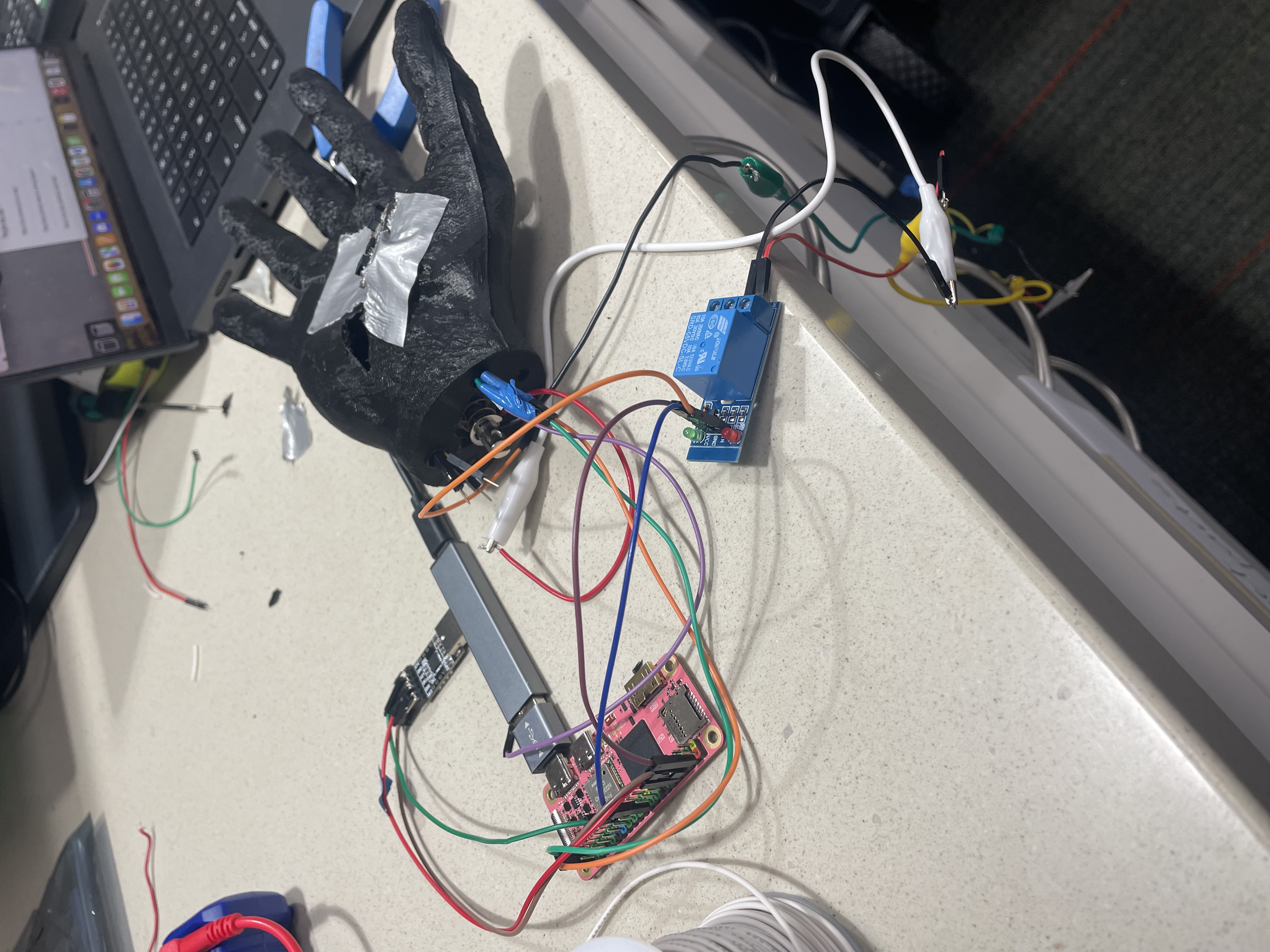

we only had 36 hours to put everything together, and that meant needing to work in parallel. luckily, I was working with a dream team of MechE and CS guys who riveted aluminum and trained models while I soldered:

on the wiring side, we faced lots of unexpected challenges. first, the motors that came with the actuators didn't match spec, meaning I had to manually figure out torque and wiring on the fly. second, everything had to be kept isolated from the pumps and tubing, and we had to operate under the assumption that wiring could get wet at any moment. to minimize risk as best we could, we covered every solder joint in heat-shrink tubing - a time-intensive but necessary step. finally, there was the preplanning: as the guy in charge of overall system design, I had to preorder every component we needed and make sure everything operated on the proper logic level and had the right drivers. in the end, this meant needing 3 separate power supplies: a high-current one for the actuator motors, and two more for the Jetson, sensors, pumps, and other controllers.

even though we didn't have a working prototype until 20 minutes before the deadline, our demo went by without any issues. see a bit of it here:

despite this being our first ever hackathon, we won Nvidia's Edge AI track out of more than 200 teams (shoutout to Nvidia and ASUS for the DGX Spark, Jetson, monitor, and HQ visit :)). we had zero expectations coming in, and we were all incredibly surprised by what we could get done in less than 2 days of work.

after the awards ceremony, our team ran a final tally: Markus ran 19 Cursor instances at once, Eric went 42 hours without sleep, and I soldered for 17 hours straight. none of us would have had it any other way.

learn more about sprout here!

want to beat the bot? play here!

every thursday evening, my Chinese roommates and I play 5/10/K: a strategy-based variant of the classic card game Dou Dizhu (斗地主). almost every thursday evening, I lose significantly more games than I win.

before long, we were debating whether a bot could help me be more competitive at the game. Dou Dizhu game engines already existed, but none did for 5/10/K, even though the game has more than 50 million players in China.

eventually, that turned into a bet: I had until the end of winter quarter to make a policy capable of beating human players.

5/10/K is easy to learn informally, but its mechanics are hard to implement precisely, and harder still to distill into a single strategy. legal actions depend heavily on the current board state, and much of the game's value is decided in the endgame.

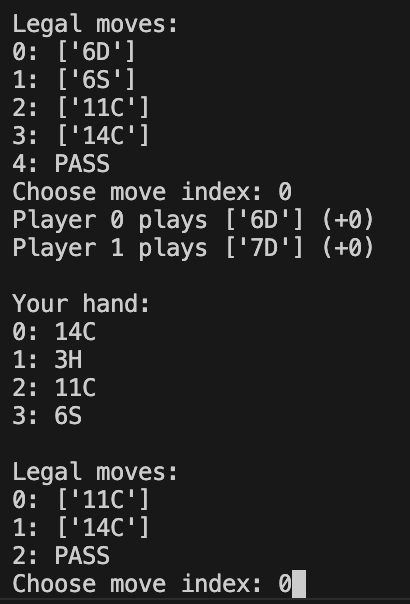
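to make "legal actions depend heavily on the current board state" concrete, here's a minimal sketch of one common way to handle it in a policy: mask out illegal moves before turning scores into probabilities. this is a generic illustration, not the bot's actual code; `masked_softmax`, the toy logits, and the mask are all made up.

```python
import math


def masked_softmax(logits, legal_mask):
    """Turn raw action scores into probabilities over legal actions only.

    logits: one score per action in a fixed action list (hypothetical).
    legal_mask: True where the action is legal in the current state.
    """
    legal_scores = [score for score, ok in zip(logits, legal_mask) if ok]
    if not legal_scores:
        raise ValueError("at least one action must be legal")

    # Subtract the largest legal score for numerical stability.
    top = max(legal_scores)
    weights = [
        math.exp(score - top) if ok else 0.0
        for score, ok in zip(logits, legal_mask)
    ]
    total = sum(weights)
    return [w / total for w in weights]


# Toy example: three candidate plays, the middle one is illegal right now.
probs = masked_softmax([1.0, 2.0, 3.0], [True, False, True])
```

illegal actions end up with probability exactly 0, so the policy can never sample them, and the legal ones still sum to 1.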

before I could even think about training an agent, I first had to simulate the game environment, since there was no existing implementation anywhere online. that meant writing the ruleset from scratch, representing actions, and encoding them in a way that a policy could actually train on. a snippet from a playtest:

because players don't have access to total information, and because so much of the reward in 5/10/K depends on realtime utility tradeoffs, the policy had to learn under uncertainty. getting this right took several different architectures and reward function iterations, including a few disastrous runs that used aggressively misaligned reward shaping. eventually, using PPO proved to be the best option; Q-learning tended to be too greedy since it learned action values that incentivised shedding point cards early.
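for context, the piece of PPO that keeps updates from swinging too hard is its clipped surrogate objective. below is a minimal sketch of that objective in plain python, assuming you already have per-step probability ratios (new policy / old policy) and advantage estimates. the function name and the 0.2 clip value are illustrative defaults, not settings from this project.

```python
def ppo_clip_objective(ratios, advantages, clip_eps=0.2):
    """Mean PPO clipped surrogate objective (higher is better).

    ratios: pi_new(a|s) / pi_old(a|s) for each sampled step.
    advantages: advantage estimate for each sampled step.
    clip_eps: how far the ratio may move from 1 before it is clipped.
    """
    total = 0.0
    for ratio, adv in zip(ratios, advantages):
        clipped_ratio = max(1.0 - clip_eps, min(ratio, 1.0 + clip_eps))
        # Take the more pessimistic of the unclipped and clipped terms.
        total += min(ratio * adv, clipped_ratio * adv)
    return total / len(ratios)
```

with a positive advantage, a ratio of 1.5 only gets credit as if it were 1.2, so a single lucky hand can't drag the policy too far in one update.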

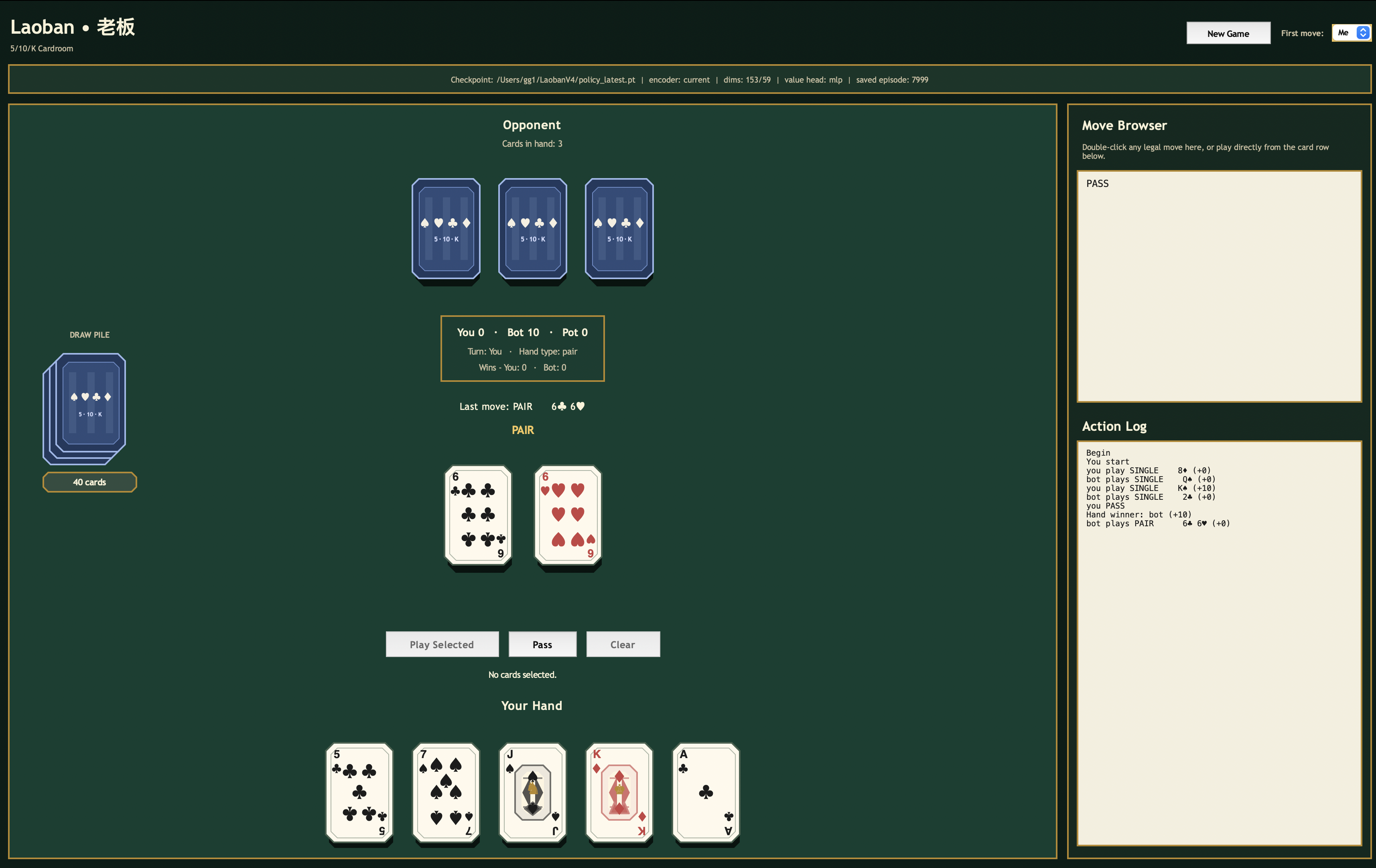

once the policy was stable enough, I built a rough GUI to playtest. so far, it's fairly robust; it's about even with my roommates (and beats me 9 times out of 10).

in short: I shot DeepMind researchers and Apple's head of design (with their consent).

in late 2025, some friends and I got access to Pupper, Stanford's open-source robot dog. as a die-hard Warriors fan, I thought it'd be awesome to teach Pupper the basics of shooting a basketball.

our original idea was to mount a Nerf gun to Pupper's back and use a YOLO model to recognize the hoop, then pitch and fire. however, we quickly realized there was no commercially available Nerf gun that could be electronically controlled and fit within our size requirements.

we had to build our own. as far as we knew, this hadn't been done on a hobby quadruped.

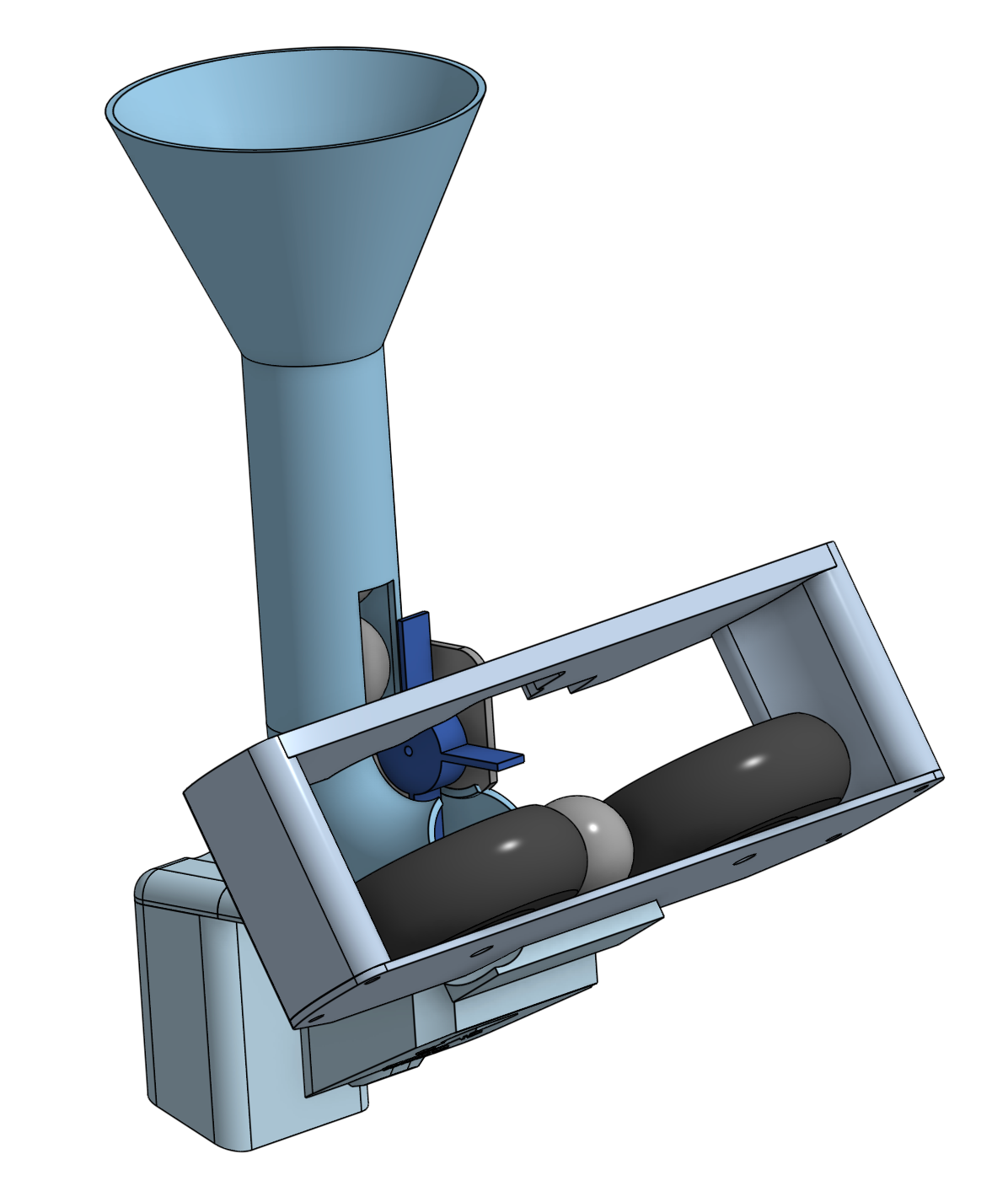

we went through three launcher iterations before deciding on a flywheel design based around Nerf's 0.8" Rival foam balls. due to budget constraints (we were broke), we kept supplies to an absolute minimum: 2 DC motors, a feeding servo, and foam model airplane wheels.

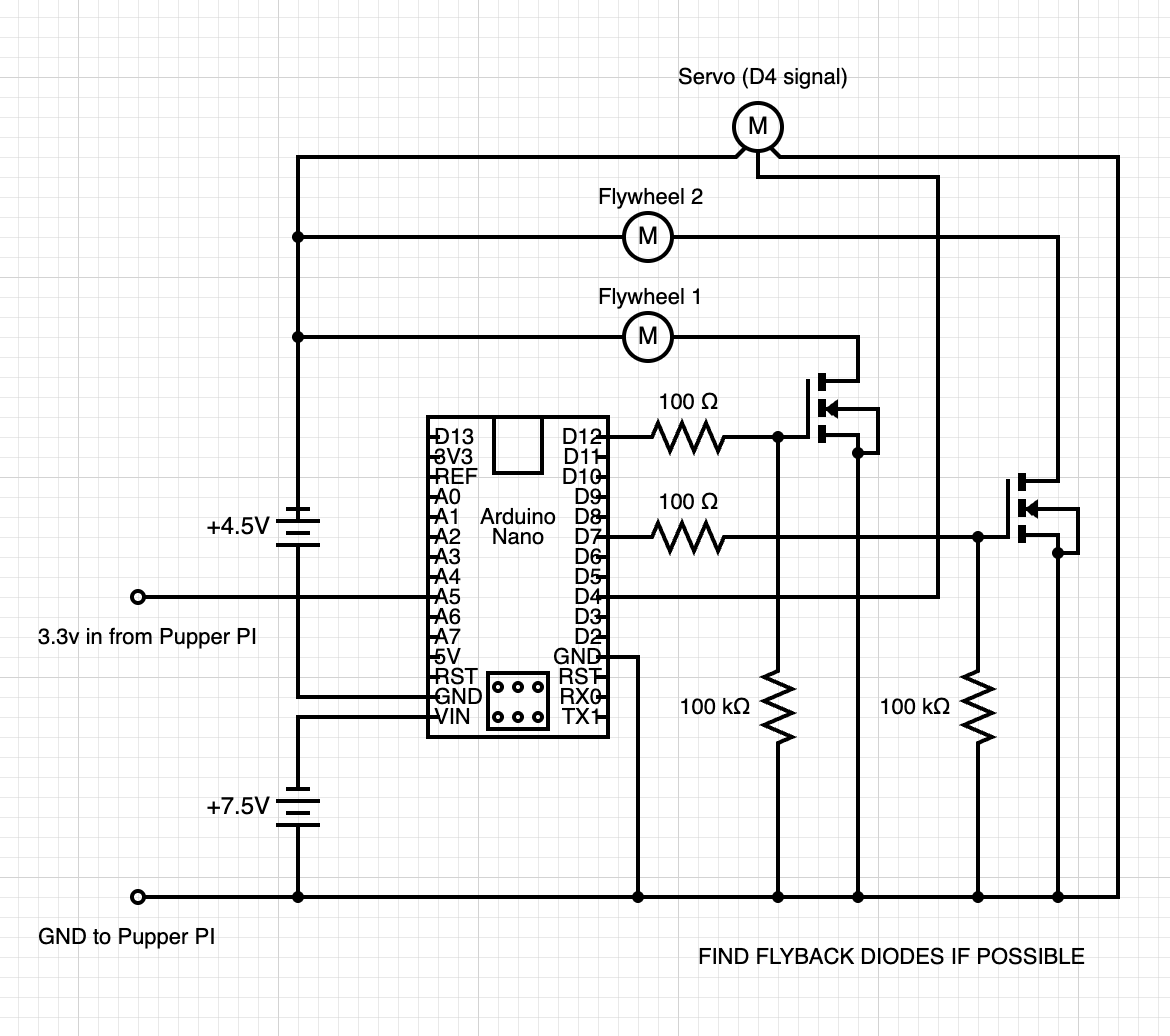

we also raided half the makerspaces on campus for electronic parts and wiring. we didn't have any boost converters to amplify our 3.3V input signal (our transistors required driving 5V to the gate), but an Arduino Nano ended up doing the trick:

there were a few important design choices we had to consider here. first, we had to wire everything without flyback diodes - at the time we were wiring our launcher, no makerspace had any compatible diodes available (you can see our crashout about this on our diagram). second, we had to deal with floating gate voltages that caused our launcher to behave erratically. this was an easier fix, albeit a lesson in the importance of pulldown resistors.

somewhere along the way, our goal shifted from shooting into a hoop to shooting at a target. to do this, we used a YOLO model with the COCO dataset for object detection. since no library existed for 6DOF motion yet, we also had to train a 500,000,000-timestep RL policy to pitch airBUD to a desired angle.
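a rough sketch of how a detection could be turned into a "desired angle" for the pitch policy, assuming a simple pinhole camera model. the function name, image size, and field of view below are hypothetical, and this ignores ballistic drop and the offset between camera and launcher.

```python
import math


def pitch_offset_deg(bbox_center_y, image_height, vertical_fov_deg):
    """Angle (degrees) to pitch up so the target sits at the image center.

    bbox_center_y: vertical pixel of the detection's center (0 = top row).
    image_height: image height in pixels.
    vertical_fov_deg: camera's vertical field of view (assumed known).
    """
    # Focal length in pixels, derived from the vertical field of view.
    half_fov = math.radians(vertical_fov_deg) / 2
    focal_px = (image_height / 2) / math.tan(half_fov)

    # Positive when the target is above the image center.
    pixels_above_center = (image_height / 2) - bbox_center_y
    return math.degrees(math.atan2(pixels_above_center, focal_px))


# Toy example: a target at the very top of a 480px-tall, 60-degree-FOV image.
angle = pitch_offset_deg(bbox_center_y=0, image_height=480, vertical_fov_deg=60)
```

a target at the top edge of the frame comes out to half the field of view, and a target already at the center comes out to 0.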

it also helped that our launcher packed a solid punch:

the time eventually came to demo. initially, we thought we'd show airBUD to a few students and researchers. instead, with the NBA on NBC theme playing in the background, we fired foam balls at Stuart Bowers (the head of Tesla autopilot), DeepMind researcher Jie Tan, robotics prof. Karen Liu, and 35 surprisingly excited elementary schoolers.

this project was a continuation of the CS107E PI, built from scratch in RISC-V assembly and C using an OS-wiped Mango Pi SoC.

you know that moment when you go to the doctor's office and they strap you up to 5 different machines to take your vitals? this project was an attempt to condense that whole process into a single step.

the final form factor we landed on was utterly insane: 3d-print a giant hollow hand and stuff it full of sensors. in theory, all your vitals could be read with a single awkwardly long handshake.

none of us had any idea what we were doing. we had never worked with raw sensor data, let alone serial communication with a nonstandard (RISC-V) board.

in retrospect, the process taught us foundational principles of working with embedded systems: always read every datasheet twice, have as much observability over communication as possible, and make sure to piecemeal-test physical systems first. in the end, we managed to get reliable signals out of our pulse, temperature, and oximeter sensors:

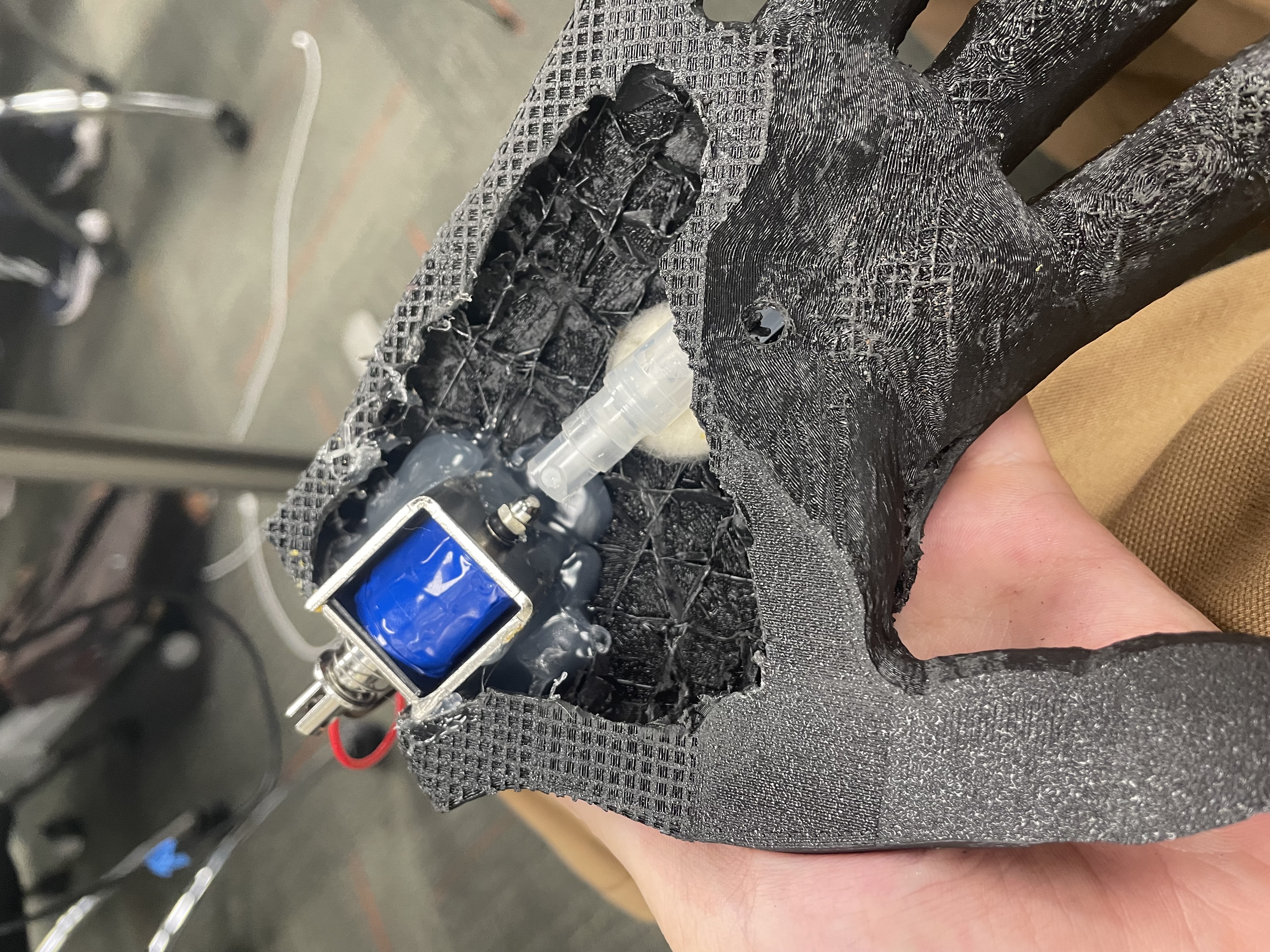

for good measure, we also wanted to make our hand self-sanitizing. this was done by dremeling out a pocket to hold a vial of liquid sanitizer and a solenoid to spray it at each handshake's falling edge:

the only thing we had left to tackle was graphics, which we were also planning to design from scratch. since our pulse sensor wasn't variable, we couldn't display the raw ECG data. but we came up with a cheeky solution: display a pulse graphic on each rising edge.
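both the sanitizer spray and the pulse graphic hang off edge detection, so here's a minimal sketch of the idea. the real project ran in C on bare metal; this is python purely for illustration, and the function names, threshold, and sample values are made up.

```python
def rising_edges(samples, threshold):
    """Indices where the signal crosses from below threshold to at/above it."""
    return [
        i
        for i in range(1, len(samples))
        if samples[i - 1] < threshold <= samples[i]
    ]


def falling_edges(samples, threshold):
    """Indices where the signal crosses from at/above threshold to below it."""
    return [
        i
        for i in range(1, len(samples))
        if samples[i - 1] >= threshold > samples[i]
    ]


# Toy on/off pulse signal: two beats, two releases.
signal = [0, 0, 1, 1, 0, 1, 0]
beats = rising_edges(signal, threshold=0.5)
releases = falling_edges(signal, threshold=0.5)
```

each index in `beats` is a moment to draw the pulse graphic; each index in `releases` is the kind of falling edge that would trigger the solenoid.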

in the end, our system was far from practical, but we learned a ton about system design, hardware-software integration, and the realities of building messy physical things from scratch.